The Silicon Photonics Arms Race: Lightmatter’s Optical Gambit

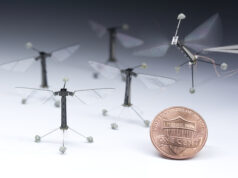

In the neon-lit battlefield of AI hardware, where every chipmaker is foaming at the mouth for more speed, Lightmatter has unleashed its latest weapon: the Passage M1000. A silicon photonic interposer designed to obliterate bandwidth constraints like a sledgehammer through glass, this bad boy is set to ship as early as summer.

Now, if you don’t speak the strange and mystical tongue of photonics, let’s break it down. The M1000 doesn’t just shuffle data between chips—it beams it through a microscopic light show, blasting bits and bytes at speeds that would make your gaming rig weep. This is no mere evolutionary step; it’s a full-scale cybernetic mutation in computing.

Death to the Bottlenecks

Lightmatter’s approach is a simple one: instead of cramming more wires into ever-tighter spaces, why not use light to move data? The M1000 sits between compute dies like a high-tech middleman, directing traffic with surgical precision. Data moves vertically across the entire chip, creating a bandwidth utopia where information flows freely and silicon heats up only from the sheer, unrelenting force of progress.

Each M1000 tile spits out a monstrous 14.25 TB/s of aggregate bandwidth (that’s 114 Tb/s, if you count in bits like the rest of this spec sheet), more than enough to make traditional electrical interconnects look like they’re crawling through molasses. If that’s not enough, Lightmatter has its sights set on even bigger game.

The Future: Faster, Smaller, and Even More Insane

By 2026, Lightmatter plans to roll out the Passage L200 and L200X, two optical juggernauts boasting bidirectional bandwidth of 32 Tb/s and 64 Tb/s respectively. To put that in perspective, their competition—Ayar Labs—is barely managing 8 Tb/s. It’s like bringing a machine gun to a knife fight.
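Those figures mix bytes and bits, so it helps to put everything on one scale before keeping score. Here is a rough back-of-the-envelope sketch in Python using only the numbers quoted in this article. The dictionary, its labels, and the helper name are illustrative, and the parts are different kinds of product, so treat the ratios as napkin math rather than a benchmark.

```python
# Normalize the article's quoted bandwidth figures to terabits per second (Tb/s).
# All numbers come from the article text; nothing here is measured.

BITS_PER_BYTE = 8


def terabytes_to_terabits(terabytes_per_s: float) -> float:
    """Convert TB/s to Tb/s."""
    return terabytes_per_s * BITS_PER_BYTE


bandwidth_tbps = {
    "Passage M1000": terabytes_to_terabits(14.25),  # quoted in TB/s
    "Passage L200": 32,   # quoted in Tb/s
    "Passage L200X": 64,  # quoted in Tb/s
    "Ayar Labs": 8,       # quoted in Tb/s
}

# Use the Ayar Labs figure as the baseline for a simple ratio.
baseline = bandwidth_tbps["Ayar Labs"]

for name, tbps in bandwidth_tbps.items():
    print(f"{name}: {tbps:g} Tb/s ({tbps / baseline:g}x the Ayar Labs figure)")
```

On these numbers the L200 lands at 4x and the L200X at 8x the 8 Tb/s quoted for Ayar Labs.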

For the engineers out there, here’s the nerd candy: the M1000 uses 56 Gb/s NRZ modulation and wavelength-division multiplexing with eight wavelengths per fiber, neatly stacking up to 448 Gb/s, or 56 GB/s, per fiber. The L200X takes it up a notch with 112 Gb/s PAM4 SerDes. If these numbers don’t get you excited, you’re probably in the wrong line of work.
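If you want to check the per-fiber math yourself, here is a minimal sketch. It assumes each wavelength carries exactly one 56 Gb/s lane and ignores encoding and protocol overhead. The function name is made up for illustration and is not anything from Lightmatter.

```python
# Per-fiber throughput under wavelength-division multiplexing (WDM).
# Assumption: one lane per wavelength, no overhead subtracted.


def fiber_throughput_gbps(wavelengths: int, lane_rate_gbps: float) -> float:
    """Aggregate rate of a single fiber in Gb/s."""
    return wavelengths * lane_rate_gbps


# M1000 figures as quoted in the article: 8 wavelengths at 56 Gb/s NRZ each.
m1000_fiber_gbps = fiber_throughput_gbps(wavelengths=8, lane_rate_gbps=56)

# Divide by 8 to go from gigabits to gigabytes per second.
m1000_fiber_gigabytes = m1000_fiber_gbps / 8

print(m1000_fiber_gbps)       # 448
print(m1000_fiber_gigabytes)  # 56.0
```

The tidy 56 GB/s falls out because there are eight wavelengths and eight bits to a byte, so the two factors cancel.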

The Heavyweights Join the Fray

Lightmatter isn’t just carving out a niche—it’s throwing haymakers in a full-blown heavyweight fight. Nvidia, Intel, Broadcom, and Ayar Labs have all dipped their toes into the optical waters, but Lightmatter is diving in headfirst.

Meanwhile, in the government’s secretive tech corridors, DARPA just threw $45 million at AI chipmaker Cerebras and co-packaged optics vendor Ranovus to integrate optical interconnects into Cerebras’s monstrous wafer-scale AI systems.

Why This Matters (And Why You Should Care)

If you thought AI was already moving fast, buckle up. The ability to shuttle data between chips at tens to hundreds of terabits per second means the next generation of GPUs and AI accelerators can spend less time waiting on data and more time crunching numbers, at a pace that makes today’s top-end hardware look quaint.

Whether you’re training massive neural networks or running high-fidelity simulations, the bandwidth bottlenecks between chips are about to get a lot less painful.

For those still clinging to traditional copper-based interconnects, consider this your wake-up call. The future is photonic. And Lightmatter? They’re leading the charge.

Bootnote: As with all great technological revolutions, expect resistance, chaos, and a fair share of nervous hand-wringing from the old guard. But one thing is certain: the age of optical dominance is upon us. Whether you ride the wave or get left behind is entirely up to you.